UCSF Library Archives and Special Collections has two new internship opportunities.

Archives Intern for AIDS History

The San Francisco Bay Area’s Response to the AIDS Epidemic: Digitizing, Reuniting and Providing Universal Access to Historical AIDS Records.

The Archives Intern for AIDS History will be assigned various tasks to help complete the project, including performing quality control checks on digitized papers, digital objects, and metadata. Candidates should be students or recent graduates of a library or information science program, preferably with a concentration or interest in archives and special collections. Students of public history and the history of health sciences are also encouraged to apply. This is a part-time, temporary appointment.

Department: Archives and Special Collections

Rank and Salary: Library Intern – $15/hr

Term: 150 hours, Fall 2018 – Spring 2019

Project Description

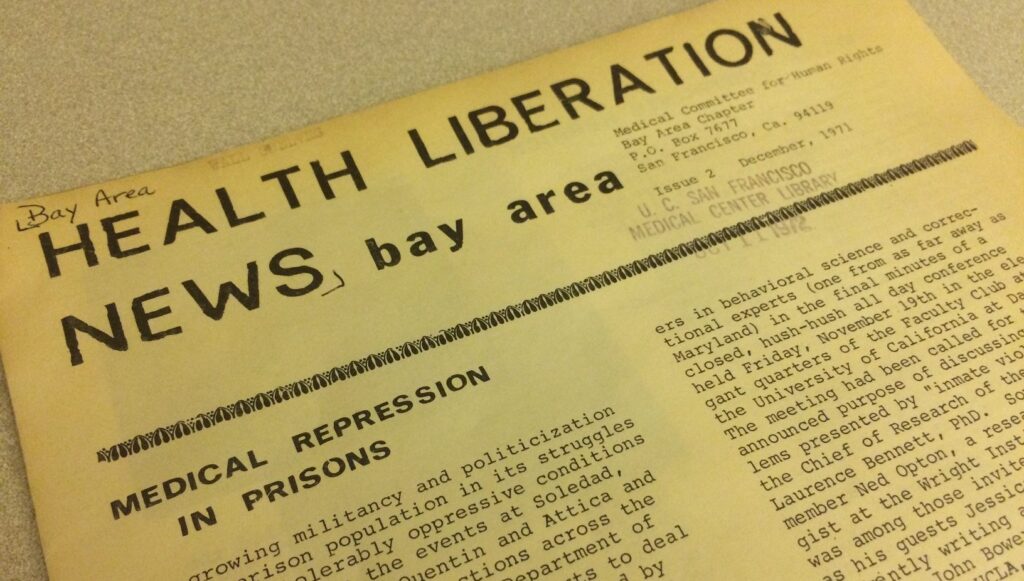

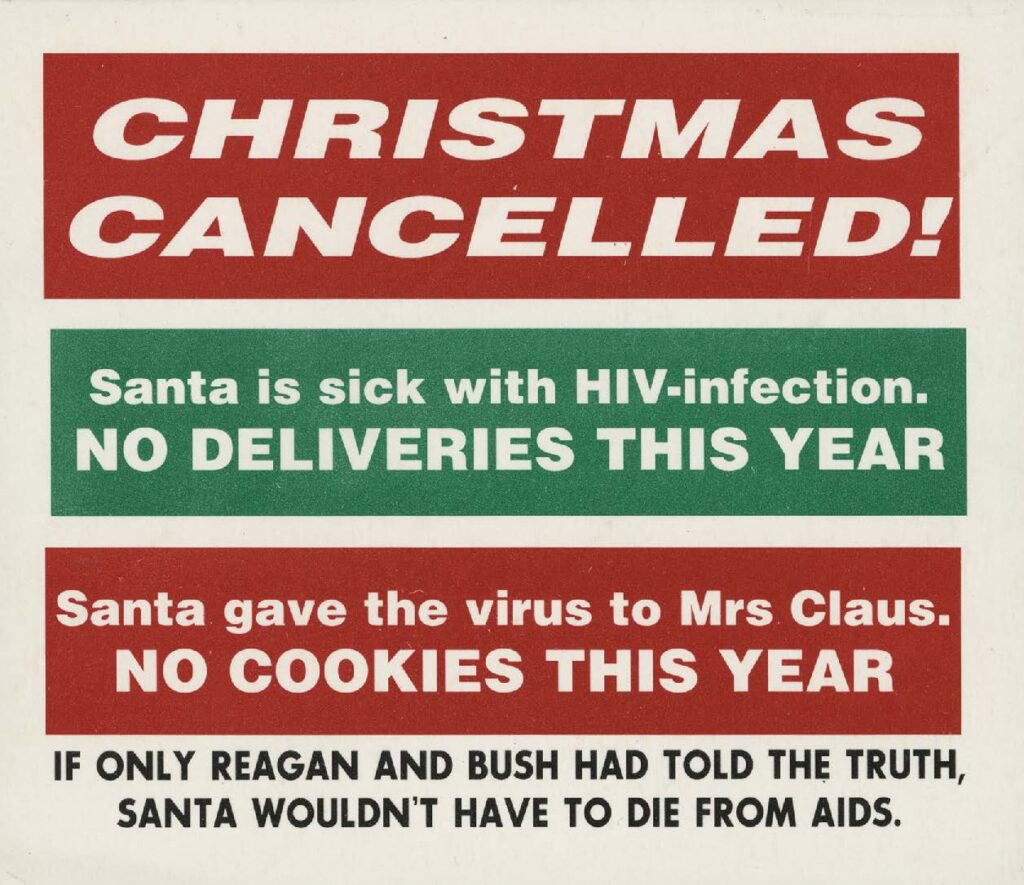

The Archives and Special Collections department of the University of California, San Francisco (UCSF) Library, in collaboration with the San Francisco Public Library (SFPL) and the Gay, Lesbian, Bisexual, Transgender (GLBT) Historical Society, has been awarded a $315,000 implementation grant from the National Endowment for the Humanities. The collaborating institutions will digitize about 127,000 pages from 49 archival collections related to the early days of the AIDS epidemic in the San Francisco Bay Area and make them widely accessible to the public online. In the process, collections whose components had been placed in different archives for various reasons will be digitally reunited, facilitating access for researchers outside the Bay Area.

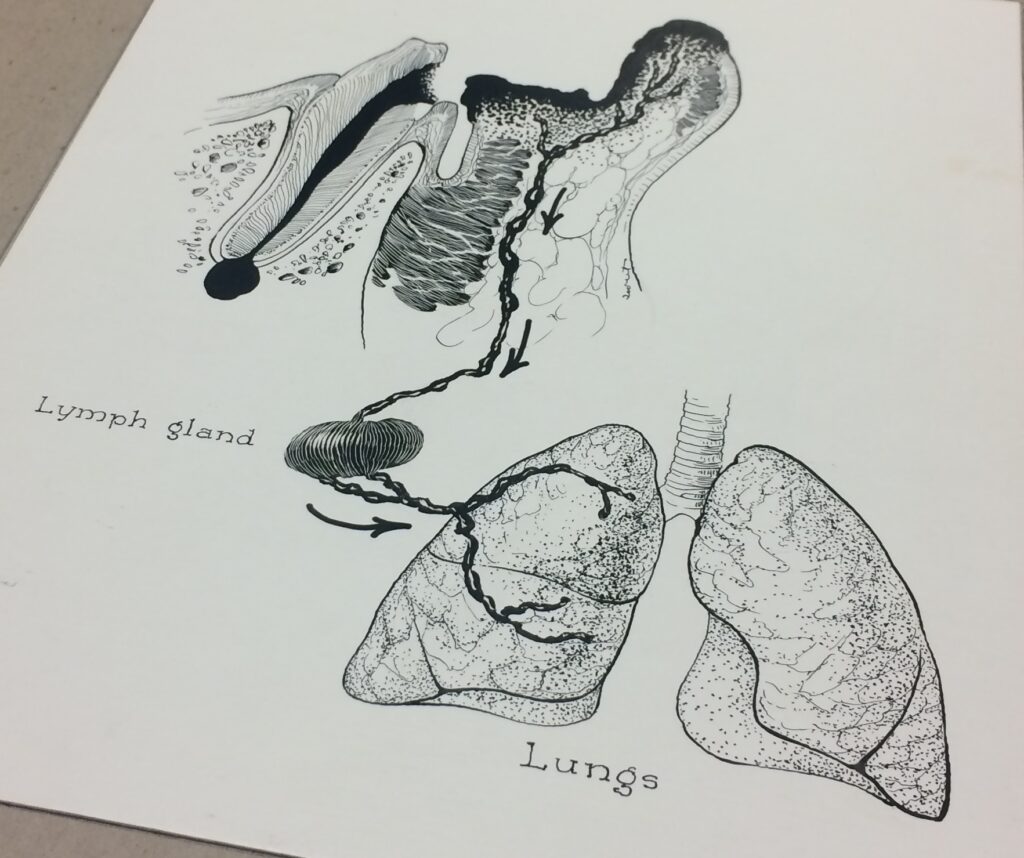

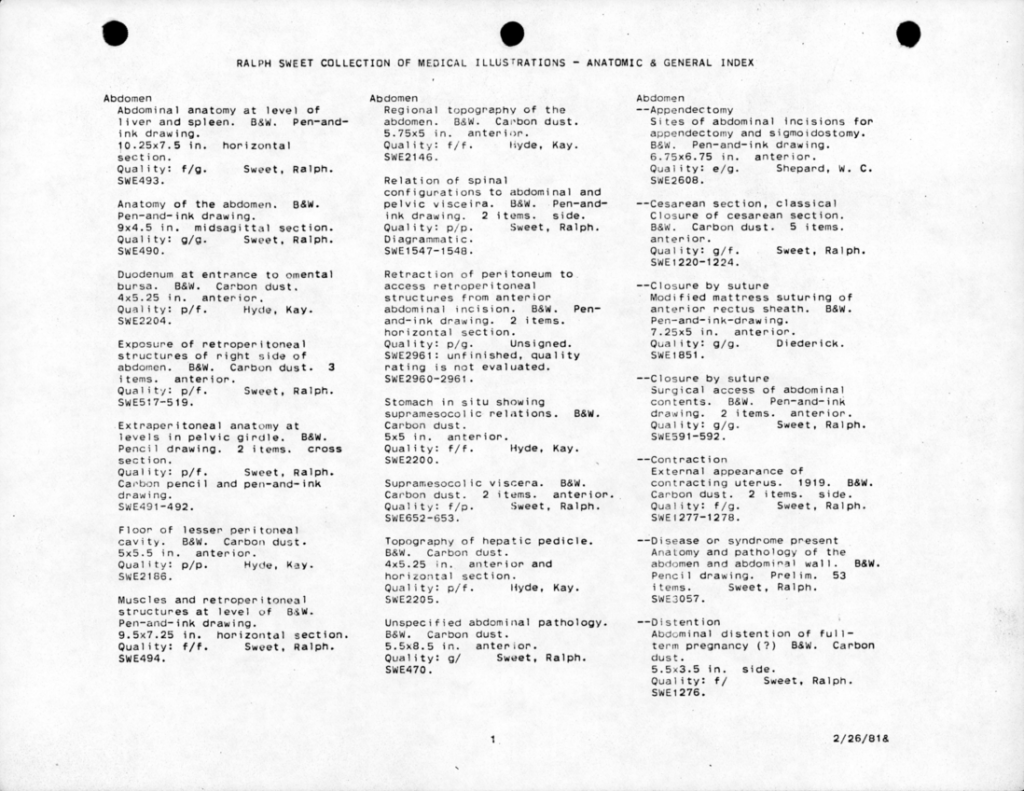

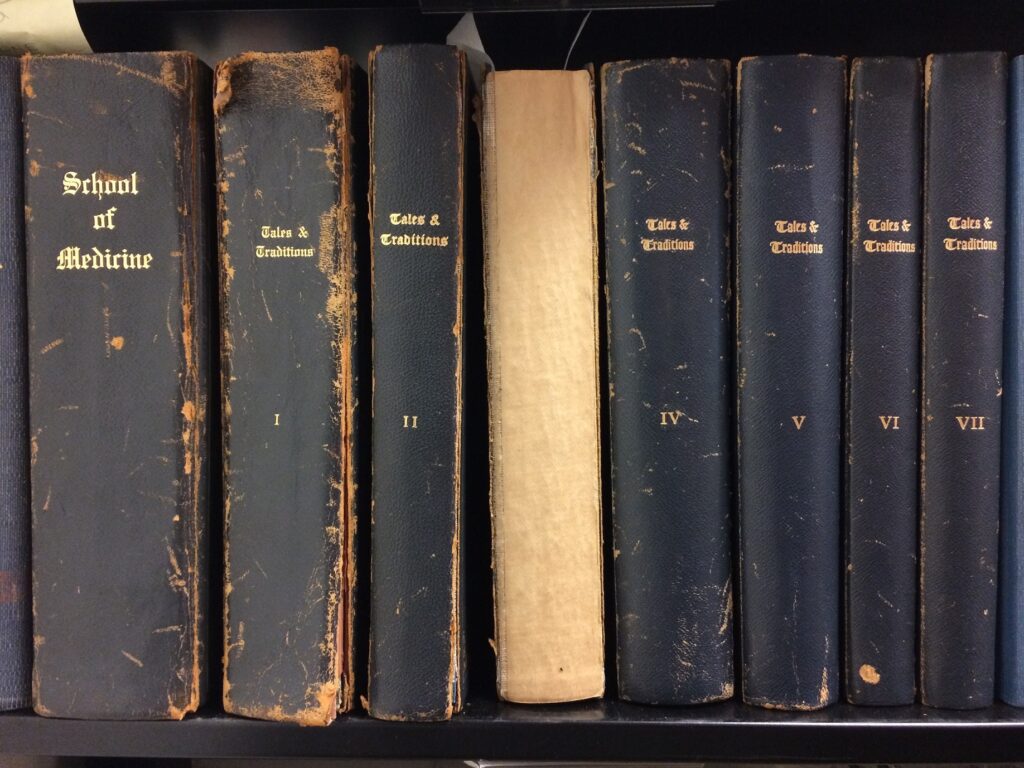

The 127,000 pages from the three archives range from handwritten correspondence and notebooks to typed reports, agency records, and printed magazines. Also included are photographic prints, negatives, transparencies, and posters. The materials will be digitized by the University of California, Merced Library's Digital Assets Unit, which has an established reputation for digitizing information resources and making them available worldwide via the web. All items selected for digitization will be carefully examined to address any privacy concerns. The digital files generated by this project will be disseminated broadly through the California Digital Library, and the objects will be freely accessible to the public through both Calisphere, operated by the University of California, and the Digital Public Library of America, which will include an AIDS history primary source set.

Skills and experience desired:

- Strong candidates will be detail-oriented and possess excellent organizational skills

- Proficiency with MS Excel and Google Sheets

- Proficiency with document sharing and cloud computing services (Google Drive, Box)

- Experience with digital asset management systems

- Ability to work independently

- Ability to lift boxes weighing up to 40 pounds

Hours and Location:

The timing of the internship is flexible, but it should begin in fall 2018 and end in early spring 2019, based on applicant and institutional commitments. The intern will work up to two 8-hour days per week for 10–12 weeks. Work will be performed on site at the library, though some off-site work is possible.

Stipend:

A stipend of $15/hour is available for the internship.

To Apply:

Applications for the UCSF Archives & Special Collections Internship, including a cover letter, a resume, and the names and contact information of two references, should be sent to:

David Krah, Project Archivist

UCSF Archives and Special Collections

University of California, San Francisco

530 Parnassus Avenue

San Francisco, CA 94143-0840

Digital Processing and Implementation Intern

The Digital Processing and Implementation Intern will assist the UCSF Digital Archivist with various aspects of the Digital Archives program as it is implemented and brought online for the first time. Potential projects include:

- Testing digital forensics and processing hardware and software being implemented in the digital forensics lab.

- Compiling an inventory of physical archival collections that contain digital media, then pulling those collections and identifying, counting, and cataloging the digital media present.

- Disk-imaging digital media removed from collections and transferring data to library storage systems.

- Creating metadata about digital media being processed in the digital forensics lab, and editing metadata for various digitization or cataloging projects.

- Operating scanning equipment to digitize archival collections for patron and researcher use.

- Processing digital collections under the supervision of the Digital Archivist, including creating finding aids and container lists and manipulating copies of born-digital content to create access-ready versions of collections.

- Researching computer tools and systems for the management and preservation of digital objects, and compiling reports on the capabilities, requirements, dependencies, and other characteristics of these utilities.

- Participating in staff meetings, assisting with writing blog posts, and helping with reference and duplication requests.

Department: Archives and Special Collections

Rank and Salary: Library Intern – $15/hr

Term: 150–200 hours, Fall 2018 – Spring 2019

Location

UCSF Library and Center for Knowledge Management,

530 Parnassus Avenue, San Francisco, CA 94143-0840

Work Type

Archival Processing, Information Technology, Computer Science

Work To Be Done

On site, with occasional opportunities to work from home or another location

Desired Qualifications

- Experience with ArchivesSpace, Nuxeo or other archival collections management software

- Experience with or interest in digital preservation, digital file formats and media, computer science, or history of computing technologies

- Experience with or interest in digital forensics in archival collections and various digital forensics tools, such as FTK Imager and BitCurator

- Familiarity with scripting, computer programming in any language, or Unix

- Excellent analytical and writing skills

- High level of accuracy and attention to detail

- Ability to work independently

- Ability to lift boxes weighing up to 40 pounds

Stipend

A stipend of $15/hour is available for the internship. The internship is intended for those who are currently enrolled in an undergraduate or graduate program.

Hours

Up to two 8-hour days per week for 10–12 weeks. Specific on-site hours are negotiable, but they must fall between 8:00 a.m. and 5:00 p.m., Monday through Friday. Start and end dates are flexible.

Application Process

Please submit a letter of interest, a current resume, and contact information for two professional references to:

Charles Macquarie

Digital Archivist

UCSF Archives and Special Collections

University of California, San Francisco

530 Parnassus Avenue

San Francisco, CA 94143-0840

The UCSF Library is committed to a culture of inclusion and respect. We embrace diversity of thought, experience, and people as a source of strength that is critical to our success. We encourage candidates who thrive in an environment that celebrates and serves our diverse communities to apply.

Equal Employment Opportunity

The University of California, San Francisco is an Equal Opportunity/Affirmative Action Employer. All qualified applicants will receive consideration for employment without regard to race, color, religion, sex, sexual orientation, gender identity, national origin, age, protected veteran or disabled status, or genetic information.

About UCSF

The University of California, San Francisco (UCSF) is a leading university dedicated to promoting health worldwide through advanced biomedical research, graduate-level education in the life sciences and health professions, and excellence in patient care. It is the only campus in the 10-campus UC system dedicated exclusively to the health sciences.

About UCSF Archives and Special Collections

UCSF Archives & Special Collections is a dynamic health sciences research center that contributes to innovative scholarship, actively engages users through educational activities, preserves past knowledge, enables collaborative research experiences to address contemporary challenges, and translates scientific research into patient care.